Anthropic's Confusing Claude Subscription Policy, Explained

Anthropic updated its Claude Code docs to ban OAuth tokens from being used in third-party tools. The community exploded. Then Anthropic said nothing was changing.

Anthropic updated its Claude Code documentation to say that using OAuth tokens from Free, Pro, or Max accounts “in any other product, tool, or service” would violate its terms of service. The community exploded. Then Anthropic said nothing was actually changing.

That about sums it up, but the details matter.

What the docs said

The updated documentation spelled it out clearly: developers building “products or services that interact with Claude’s capabilities” should use API keys. “Anthropic does not permit third-party developers to offer Claude.ai login or to route requests through Free, Pro, or Max plan credentials on behalf of their users.”

This is, however, exactly how tools like NanoClaw run their onboarding flow. The Agent SDK, which underpins all of this, lets developers build agents with Claude Code at the core. Many of these tools rely on subscription credentials rather than API keys to function, because subscription plans offer significantly more value per dollar than API pricing.

The Claude community on Reddit and X read the update and drew the obvious conclusion: Anthropic was shutting this down.

u/zeetu

This still leaves it unclear if we can use Claude Code headless to power agents or building harnesses on top of headless CC.

u/shooshmashta

What if I created a bunch of tasks to complete in a ralph loop and just want claude to run through them? That seems like a good use case for cc max 20 if you ask me.

u/TallShift4907

You have to stay up all night, then.

u/shooshmashta

If anyone asks, I never sleep.

u/TwoSubstantial4710

It looks like the idea is to force users to pay for API credits on top of their subscriptions… I feel stupid for spending so much time developing a tool based around Claude now, wish I could have the last two months of my life back.

u/bhaktatejas

They somehow made this even more unclear than before. Some employees are saying agent SDK is acceptable usage.

What Anthropic actually meant

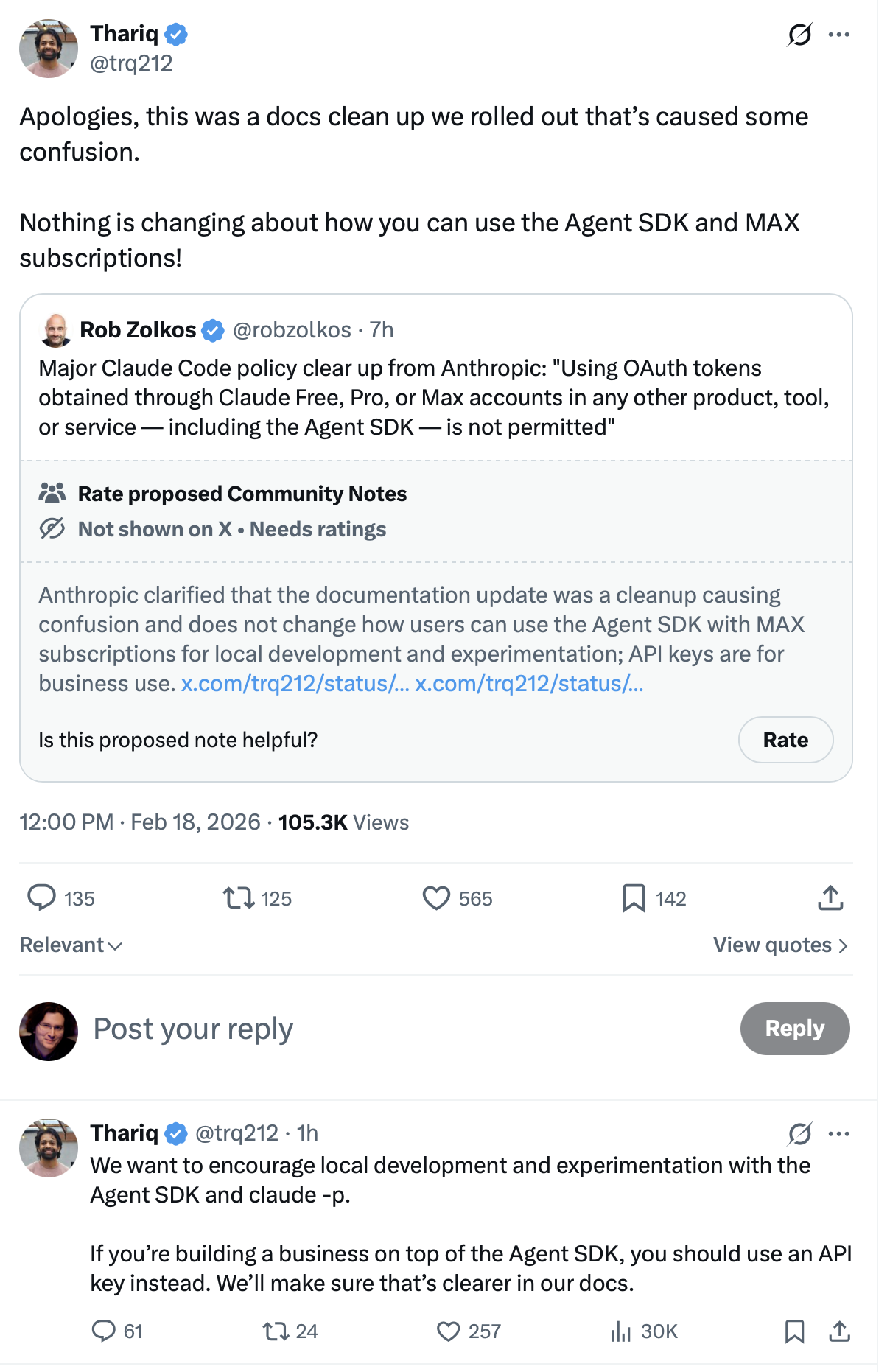

Thariq Shihipar, who works on Claude Code at Anthropic, stepped in with a clarification that got a fair amount of traction:

The short version: personal use and local experimentation are fine. If you’re building a business on the Agent SDK, use an API key. Nothing about that line was new, but the docs update made it sound like it was.

Anthropic confirmed the same when asked directly: “Nothing changes around how customers have been using their account and Anthropic will not be canceling accounts. The update was a clarification of existing language in our docs to make it consistent across pages.”

Why people didn’t buy it

The clarification was welcome, but trust had already taken a hit. A few things made this harder to dismiss as a simple docs cleanup.

Anthropic had previously asked Peter Steinberger, the founder of Clawdbot, to rename his project for legal reasons. It’s now called OpenClaw, and Steinberger has since joined OpenAI. A few weeks before the docs update, Anthropic also banned tools like OpenCode from using its OAuth system entirely.

Put those together and a “docs cleanup” reads more like a pattern. The community noticed.

There’s also the underlying economics. Many users have long suspected that the value on Pro and Max plans is partly subsidized by the higher margins on API usage. The fear is simple: at some point, Anthropic tightens the screws, and the “too good to be true” era of flat-rate AI subscriptions ends. The docs update, even if technically a cleanup, fit that narrative too cleanly to be ignored.

Where things stand

Personal use and local development with the Agent SDK are explicitly fine, per Shihipar’s post and Anthropic’s statement. If you’re building a product with paying users, Anthropic wants it on API keys rather than routed through subscription credentials.
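For readers unsure what "on API keys" means in practice, here is a minimal sketch, not an official recipe. It builds (but does not send) a request to Anthropic's Messages API, authenticated with an API key in the `x-api-key` header instead of a subscription OAuth token. The function name `build_request`, the `max_tokens` value, and the model name the caller passes in are illustrative assumptions, not anything taken from Anthropic's docs update.

```python
import json
import os
import urllib.request

API_URL = "https://api.anthropic.com/v1/messages"


def build_request(prompt, model, api_key=None):
    """Build (but do not send) a Messages API request that uses an API key.

    `build_request` is a hypothetical helper for illustration only.
    The key comes from the argument or the ANTHROPIC_API_KEY environment
    variable; no Free, Pro, or Max login credentials are involved.
    """
    key = api_key or os.environ.get("ANTHROPIC_API_KEY")
    if not key:
        raise RuntimeError("No API key found; set ANTHROPIC_API_KEY.")

    body = {
        "model": model,  # caller supplies a model name; none is assumed here
        "max_tokens": 256,  # arbitrary illustrative limit
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "x-api-key": key,
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
        method="POST",
    )
```

Sending the request (for example with `urllib.request.urlopen`) would bill the API account tied to the key, which is the separation between product traffic and personal subscriptions that Anthropic's statement describes.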

The boundary between “personal experimentation” and “product” is still fuzzy, and Anthropic has not published clearer guidance. For now, the community is taking Anthropic at its word. But trust is easier to lose than to rebuild, and this was an unforced error on a topic that did not need one.
